Tag: recognition

-

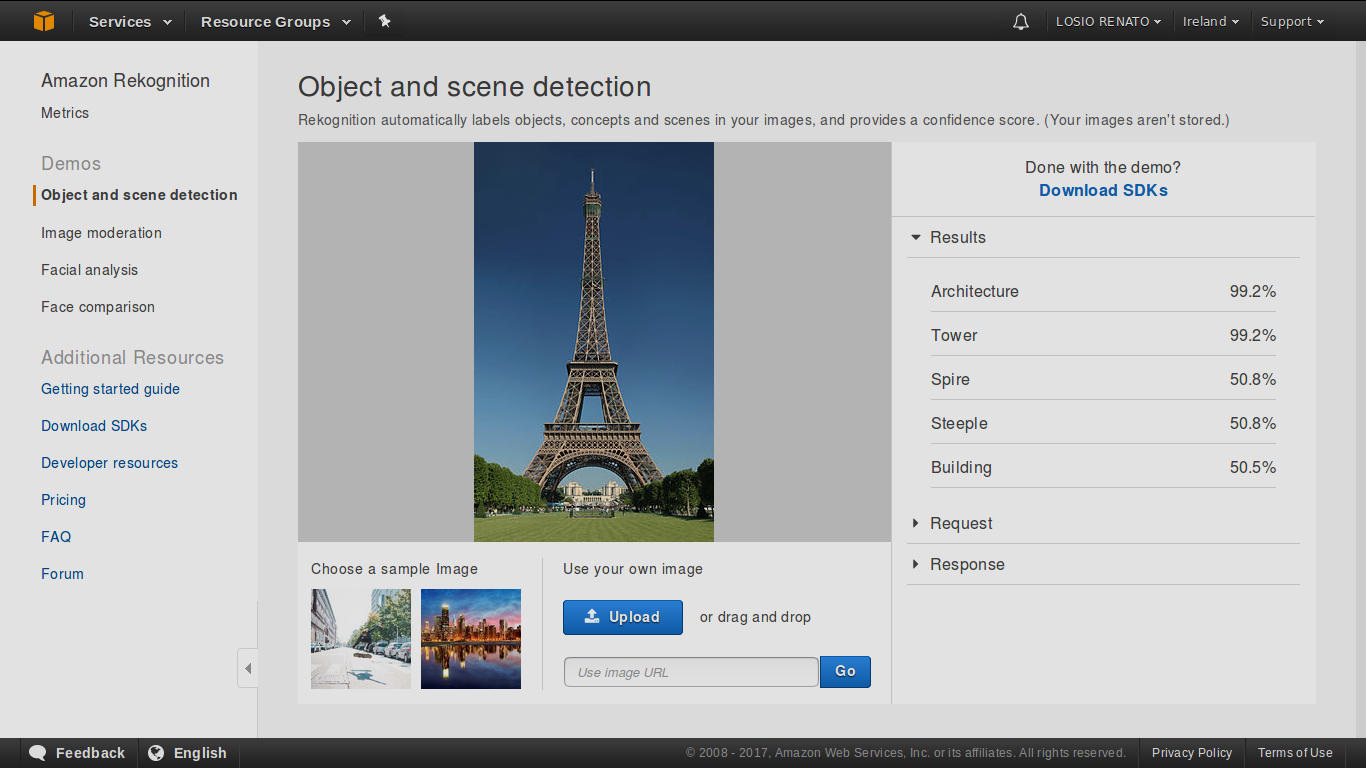

Presentation at Berlin AWS User Group

Here are the slides of my presentation last night at the Berlin AWS User Group on using Amazon Rekognition to find... Read the full article.

-

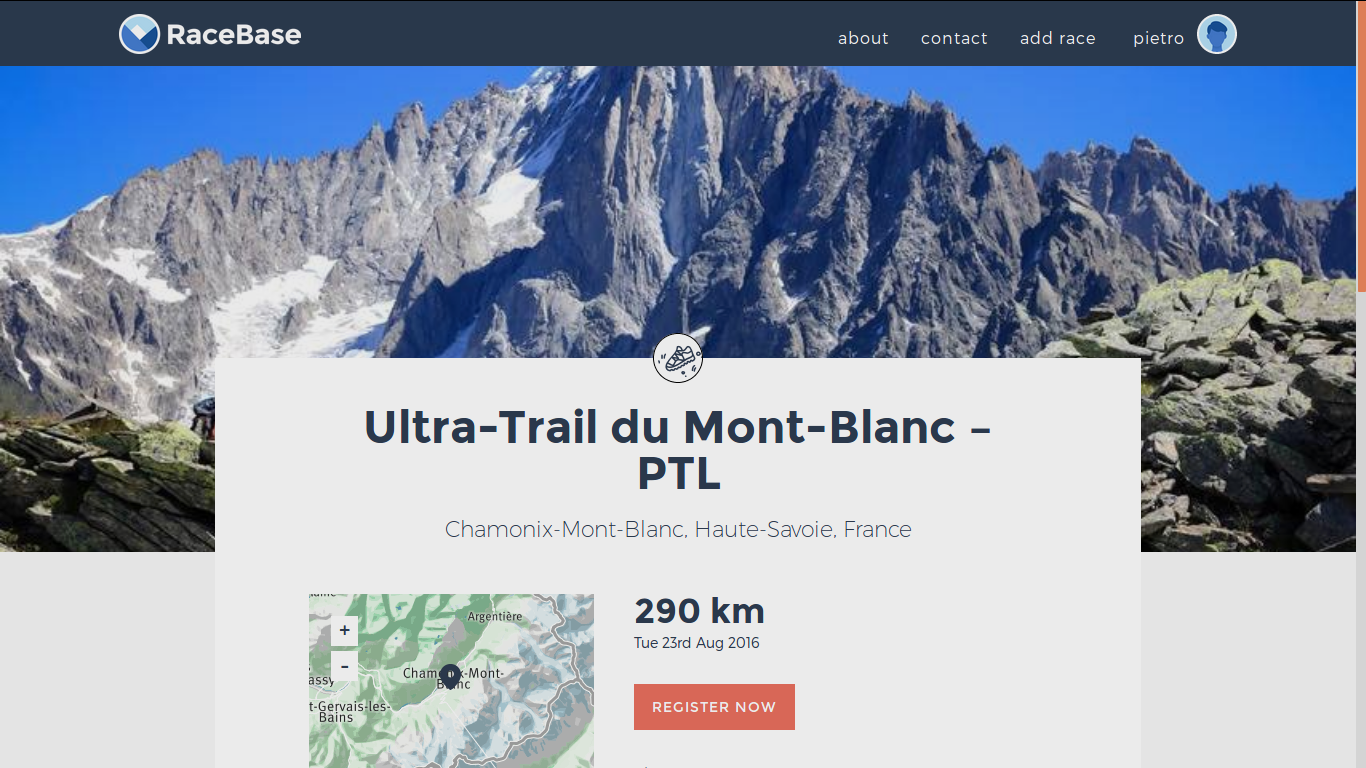

Location-based services and countries on AWS

A few weeks ago I covered my experience and challenges working with geolocation technologies for Funambol and RaceBase World, as... Read the full article.

-

Finding marathon cheats using Amazon Rekognition

In the last few weeks many articles have been published about the problem of cheating in the biggest running events, ... Read the full article.