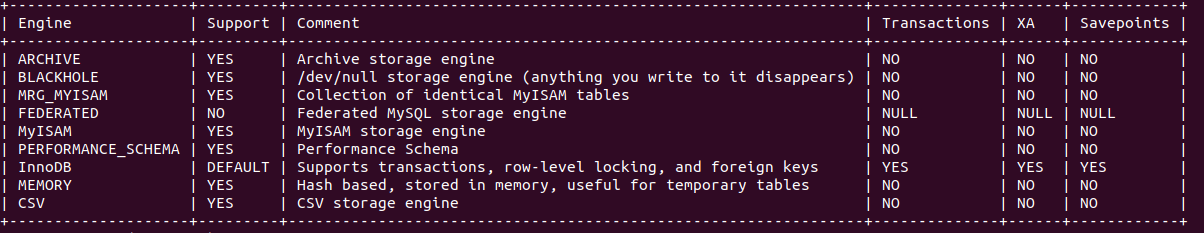

Tag: mysql

-

Amazon Aurora is Now 60 Times Faster than RDS for MySQL. Really.

Unlike traditional benchmarks, which are lengthy and complex, this evaluation is concise: only about 200 words, and it takes just a couple of minutes to review the results. Read the full article.

-

Blue/Green Deployments for RDS: How Fast is a Switchover?

AWS recently announced the general availability of RDS Blue/Green Deployments, a new feature for RDS and Aurora that performs blue/green database updates. One aspect that caught my eye is how fast a switchover is. Read the full article.

-

The best way to optimize IOPS on RDS MySQL

The best way to optimize IOPS and have fewer problems with data is not to have the data in the first place. Read the full article.

-

ADDO 2022: Drawing the NYC Skyline with a serverless database

I am pleased to be virtually on stage at All Day DevOps for the third year in a row on… Read the full article.

-

The Future of Relational Databases on the Cloud

Yesterday I really enjoyed being one of the keynote speakers at the virtual Cloud Computing Conference Greece. Thanks, Grigoris, for… Read the full article.

-

Cloud Computing Conference Greece

In a week's time I will be a keynote speaker at the Cloud Computing Conference organized by Boussias in Greece. Read the full article.

-

Live in Vegas

Can we draw the New York City skyline with Aurora Serverless v2? It was an exciting day on stage at re:Invent. Read the full article.