Tag: rds

-

Amazon Aurora is Now 60 Times Faster than RDS for MySQL. Really.

Unlike traditional benchmarks that are lengthy and complex, this evaluation will be concise, comprising only about 200 words and taking just a couple of minutes to review the results.Read the full article.

-

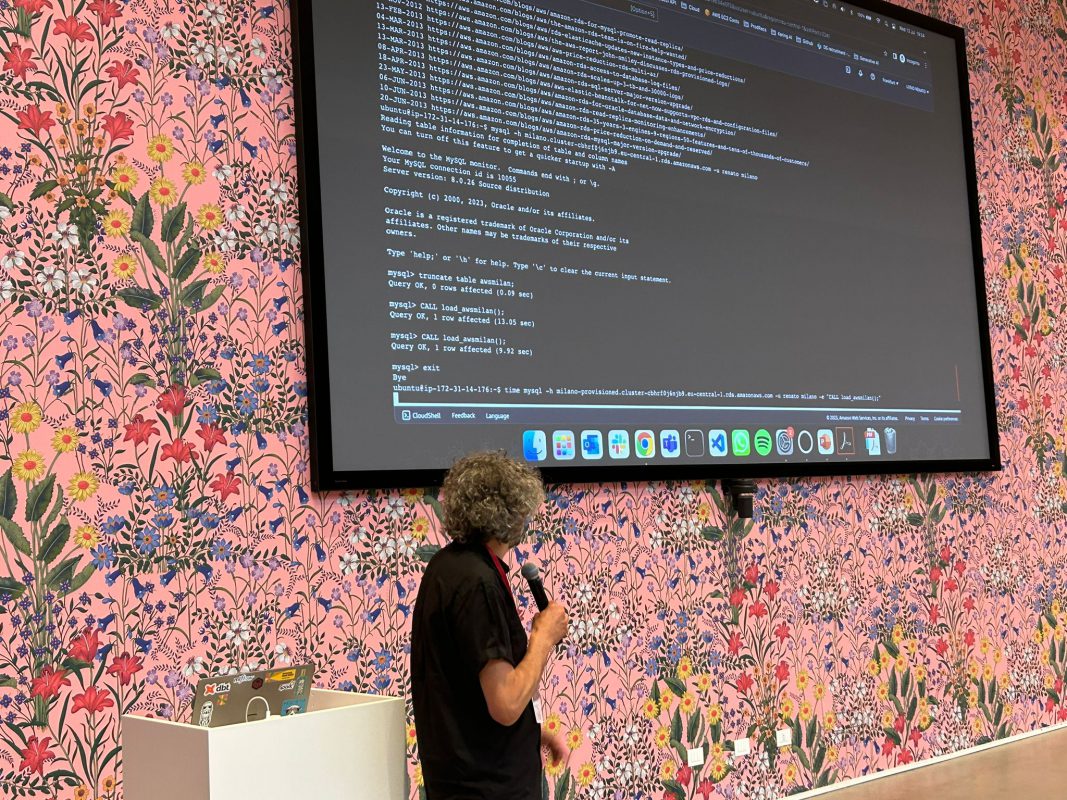

Renato @ AWS User Group Milano

This week I had the pleasure to talk about RDS, my favorite AWS service, at the AWS User Group in Milan. Read the full article.

-

Unlocking the Secrets of the Magic Number 35

The maximum automatic backup retention period on RDS and many other cloud services is 35 days. But what is so special about 35?Read the full article.

-

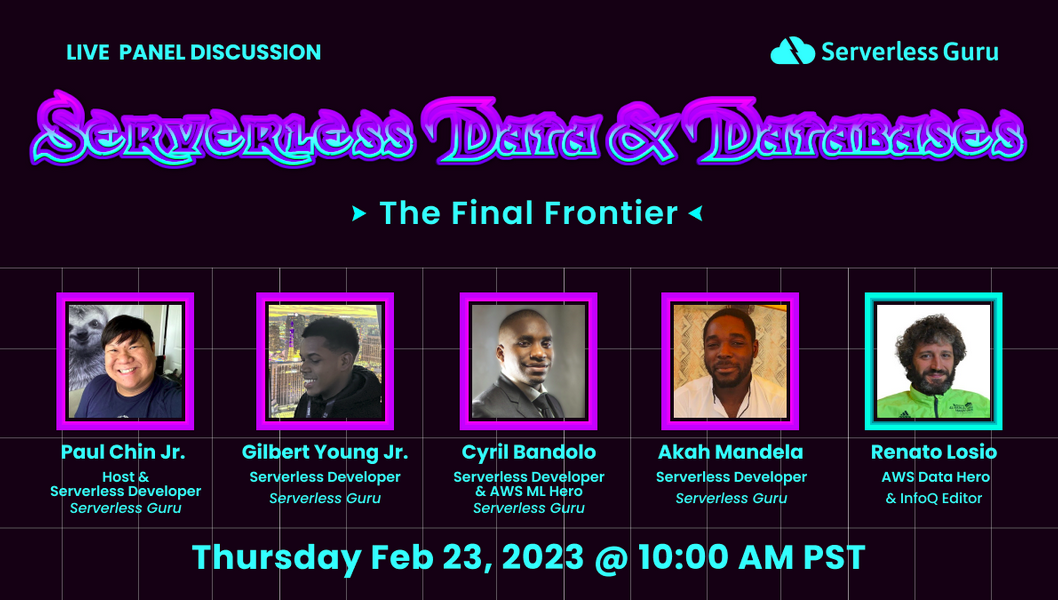

Serverless Panel: Data & Databases

Serverless Guru Panel: Data & Databases. See why Databases are the “next frontier” with Special Guest Renato Losio Read the full article.

-

InfoQ – December 2022

From Autoclass for Cloud Storage on Google Cloud to VPC Lattice, from SkyPilot to re:Invent: a recap of my articles for InfoQ in December.Read the full article.

-

Blue/Green Deployments for RDS: How Fast is a Switchover?

AWS recently announced the general availability of RDS Blue/Green Deployments, a new feature for RDS and Aurora to perform blue/green database updates. One of the aspects that caught my eye is how fast a switchover is. Read the full article.

-

Serverless Architecture Conference London 2023

London is a unique city for me, I cannot forget many years studying and working there. I always love toRead the full article.